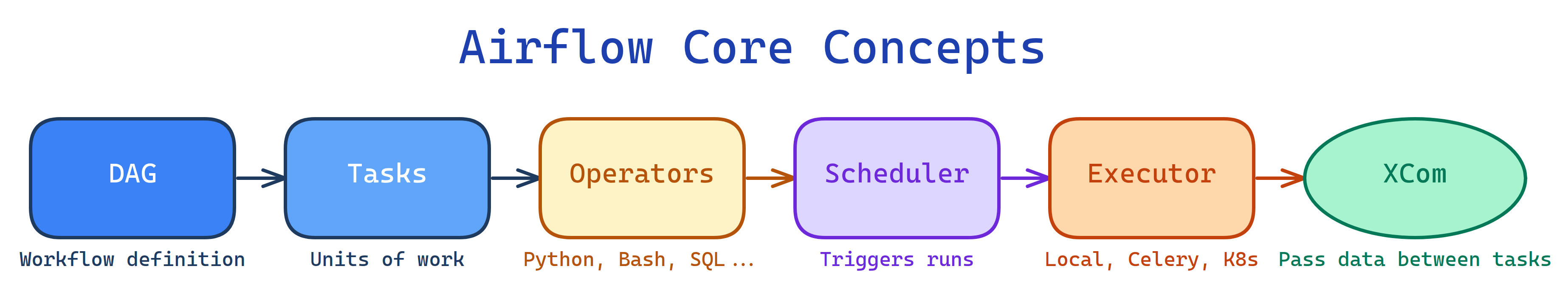

Core Concepts of Airflow

Airflow has 6 key pieces: DAGs (workflow definition), Tasks (units of work), Operators (task templates), Scheduler (triggers runs), Executor (runs tasks), and XComs (pass data between tasks). The Scheduler reads your DAG, creates Task Instances, and the Executor runs them.

The Big Picture

Explain Like I'm 12

DAG = the recipe (steps + order). Task = one step in the recipe ("chop onions"). Operator = the type of step (chopping, boiling, baking). Scheduler = the kitchen timer that says "start cooking at 6pm." Executor = the stove/oven where cooking actually happens. XCom = passing a bowl of chopped onions from one step to the next.

Cheat Sheet

| Concept | What It Does | Analogy |
|---|---|---|
| DAG | Defines workflow: tasks + dependencies + schedule | Recipe with steps in order |
| Task | A single unit of work in a DAG | One step: "chop onions" |
| Operator | Template for a type of work | Type of kitchen action |
| Scheduler | Monitors DAGs, triggers runs at the right time | Kitchen timer |
| Executor | Determines where/how tasks run | The stove/oven |
| XCom | Passes small data between tasks | Passing a bowl between steps |

The 6 Building Blocks

DAGs — Your Workflow Definition

A DAG (Directed Acyclic Graph) is a Python file that defines what tasks to run, in what order, and on what schedule. "Directed" means tasks flow in one direction. "Acyclic" means no loops.

```python
from datetime import datetime

from airflow import DAG
from airflow.operators.python import PythonOperator

# extract_data, transform_data, and load_data are assumed to be defined elsewhere.

with DAG(
    dag_id="my_etl_pipeline",
    start_date=datetime(2024, 1, 1),
    schedule="@daily",  # Run once per day
    catchup=False,  # Don't backfill past dates
    tags=["etl", "production"],
) as dag:
    extract = PythonOperator(task_id="extract", python_callable=extract_data)
    transform = PythonOperator(task_id="transform", python_callable=transform_data)
    load = PythonOperator(task_id="load", python_callable=load_data)

    extract >> transform >> load  # Set dependencies
```

Key parameters: start_date (when scheduling begins), schedule (cron expression or preset like @daily), catchup (whether to backfill missed runs), max_active_runs (concurrency limit), default_args (shared task settings like retries, owner).
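To make "directed" and "acyclic" concrete, here is a minimal sketch that uses Python's standard-library `graphlib` rather than Airflow itself. The dictionary layout and the `run_order` helper are illustrative assumptions, not Airflow's internal representation.

```python
from graphlib import CycleError, TopologicalSorter

# Map each task to the set of tasks it depends on (its upstream tasks).
# The task names mirror the pipeline above; the dict layout is illustrative.
pipeline = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
}


def run_order(deps):
    """Return one valid execution order, or raise CycleError if there is a loop."""
    return list(TopologicalSorter(deps).static_order())


order = run_order(pipeline)
print(order)  # ['extract', 'transform', 'load']

# Making extract depend on load closes a loop, so no valid order exists
# and the graph is no longer a DAG.
cyclic = {**pipeline, "extract": {"load"}}
try:
    run_order(cyclic)
    has_cycle = False
except CycleError:
    has_cycle = True
print(has_cycle)  # True
```

A graph with a loop has no starting point that respects every dependency, which is why a workflow definition has to be acyclic.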

Tasks & Operators

A Task is a node in your DAG. An Operator is the class that defines what that task does.

| Operator | What It Does | Example |
|---|---|---|
| PythonOperator | Runs a Python function | Transform data with pandas |
| BashOperator | Runs a bash command | dbt run --models staging |
| SQLExecuteQueryOperator | Executes SQL on a database | INSERT INTO ... SELECT ... |
| S3ToRedshiftOperator | Loads S3 data into Redshift | Cloud data warehouse load |
| KubernetesPodOperator | Runs a container on K8s | Spark job in a pod |
| EmailOperator | Sends an email | Alert on pipeline completion |

Task Dependencies

```python
# Bitshift operators (most common)
extract >> transform >> load

# Set upstream/downstream
transform.set_upstream(extract)
transform.set_downstream(load)  # equivalent to the bitshift chain above

# Fan-out / Fan-in
extract >> [transform_a, transform_b] >> load
```

Task Lifecycle
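A task instance's lifecycle can be sketched as a small state machine. The example below is a simplified model, not Airflow's scheduler code: the state names (`scheduled`, `queued`, `running`, `success`, `failed`, `up_for_retry`) are real Airflow task states, while `simulate_attempts` and its arguments are hypothetical and exist only for illustration.

```python
def simulate_attempts(outcomes, retries):
    """Return the states a task passes through for the given attempt outcomes.

    outcomes: one boolean per attempt (True means the attempt succeeded).
    retries:  how many extra attempts are allowed after the first failure.
    """
    history = ["scheduled"]
    for attempt, succeeded in enumerate(outcomes):
        history += ["queued", "running"]
        if succeeded:
            history.append("success")
            return history
        if attempt < retries:
            # A real deployment would wait retry_delay before re-queueing.
            history.append("up_for_retry")
        else:
            history.append("failed")
            return history
    return history


# Fails once, then succeeds on the retry.
print(simulate_attempts([False, True], retries=1))

# Fails twice with only one retry allowed, so it ends in "failed".
print(simulate_attempts([False, False], retries=1))
```

The model skips several intermediate states and the waiting period, but it shows the main branch: a failed attempt leads to `up_for_retry` while retries remain and to `failed` once they are used up.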

Scheduler — The Brain

The Scheduler is a long-running process that:

- Parses DAG files from the dags/ folder
- Determines which DAG runs need to be created based on schedule
- Checks task dependencies for each DAG run
- Sends ready tasks to the Executor
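The dependency check in the list above can be sketched in a few lines. This is a toy model that assumes the default rule that a task runs only after all of its upstream tasks succeed. The `ready_tasks` helper and the plain-dict state tracking are hypothetical, not the Scheduler's actual implementation.

```python
def ready_tasks(upstream, states):
    """Return tasks that have not started and whose upstream tasks all succeeded."""
    return sorted(
        task
        for task, parents in upstream.items()
        if states.get(task) is None
        and all(states.get(parent) == "success" for parent in parents)
    )


# Fan-out / fan-in graph: each task maps to the tasks it waits for.
upstream = {
    "extract": [],
    "transform_a": ["extract"],
    "transform_b": ["extract"],
    "load": ["transform_a", "transform_b"],
}

states = {}
first = ready_tasks(upstream, states)
print(first)  # ['extract']

states["extract"] = "success"
second = ready_tasks(upstream, states)
print(second)  # ['transform_a', 'transform_b']

states["transform_a"] = "success"
third = ready_tasks(upstream, states)
print(third)  # ['transform_b'] because load still waits for transform_b
```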

execution_date (now logical_date) is the start of the data interval, not when the DAG runs. A daily DAG for 2024-01-15 actually runs on 2024-01-16 (at the end of the interval). This trips up everyone at first.
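The date arithmetic is easy to check with the standard library. This sketch assumes a plain daily schedule; `daily_interval` is a hypothetical helper, not an Airflow function.

```python
from datetime import datetime, timedelta


def daily_interval(logical_date):
    """Return (interval_start, interval_end) for a daily schedule.

    The run is triggered once the interval has closed, i.e. at interval_end.
    """
    interval_start = logical_date
    interval_end = logical_date + timedelta(days=1)
    return interval_start, interval_end


start, end = daily_interval(datetime(2024, 1, 15))
print(start.date(), "->", end.date())  # 2024-01-15 -> 2024-01-16
```

The run labelled 2024-01-15 covers that whole day, so the earliest moment it can start is midnight on 2024-01-16.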

Executors — Where Tasks Run

| Executor | How It Works | Best For |
|---|---|---|
| SequentialExecutor | One task at a time (default) | Development/testing only |
| LocalExecutor | Parallel tasks on the same machine | Small-medium workloads |
| CeleryExecutor | Distributes to Celery workers via message queue | Large-scale, multi-node |
| KubernetesExecutor | Spins up a new K8s pod per task | Dynamic scaling, isolation |
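As a loose analogy for the first two rows, the sketch below runs the same set of callables one at a time and then through a worker pool. It uses only the standard library. The thread pool stands in for local parallelism as a simplifying assumption, and `run_sequential` and `run_parallel` are hypothetical helpers, not Airflow APIs.

```python
from concurrent.futures import ThreadPoolExecutor


def run_sequential(tasks):
    """Run tasks one at a time, in definition order."""
    return {name: fn() for name, fn in tasks.items()}


def run_parallel(tasks, max_workers=4):
    """Submit every task to a worker pool, then collect the results."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(fn) for name, fn in tasks.items()}
        return {name: future.result() for name, future in futures.items()}


# Two independent tasks with no dependency between them.
tasks = {"count_rows": lambda: 3, "count_columns": lambda: 5}

print(run_sequential(tasks) == run_parallel(tasks))  # True
```

Both strategies produce the same results. What changes is how many tasks can be in flight at once, which is the choice the executor setting controls.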

XComs — Pass Data Between Tasks

XCom (Cross-Communication) lets tasks share small pieces of data. A task pushes a value, and downstream tasks pull it.
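Conceptually, this push/pull exchange behaves like a small keyed store. The class below is a toy in-memory stand-in (by default Airflow keeps XComs in its metadata database). `XComStore` and its methods are hypothetical names, though `return_value` is the key Airflow uses for a task's return value.

```python
class XComStore:
    """Toy in-memory stand-in for XCom storage, for illustration only."""

    def __init__(self):
        self._values = {}

    def push(self, task_id, value, key="return_value"):
        """Store a value under (task_id, key)."""
        self._values[(task_id, key)] = value

    def pull(self, task_id, key="return_value"):
        """Fetch the value a task pushed, or None if nothing was pushed."""
        return self._values.get((task_id, key))


store = XComStore()
store.push("extract", {"rows": 3})   # upstream task shares a small result
rows = store.pull("extract")["rows"]  # downstream task reads it back
print(rows)  # 3
```

Because the values live in shared storage, XComs suit small items such as IDs, counts, or file paths rather than whole datasets.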

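```python
from airflow.decorators import task

# fetch_from_api and clean are placeholders assumed to be defined elsewhere.

# Push (automatic with return value in TaskFlow API)
@task
def extract():
    data = fetch_from_api()
    return data  # automatically pushed as XCom

# Pull
@task
def transform(data):  # pulled automatically when called as transform(extract())
    return clean(data)
```

Connections & Variables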

Connections store credentials for external systems (database host/port/password, AWS keys, API tokens). Managed in the UI or via environment variables.

Variables are global key-value pairs for configuration (environment name, feature flags, thresholds). Access with Variable.get("key").
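When set through the environment, connections use the `AIRFLOW_CONN_<CONN_ID>` naming convention with a URI value, and variables use `AIRFLOW_VAR_<KEY>`. The sketch below shows how such a URI encodes host, port, and credentials. It uses plain `urllib.parse` rather than Airflow's own parser, and the connection ID, credentials, and `parse_conn_uri` helper are hypothetical.

```python
import os
from urllib.parse import urlsplit

# Hypothetical connection and variable, set here only for illustration.
os.environ["AIRFLOW_CONN_MY_POSTGRES"] = (
    "postgres://etl_user:s3cret@db.example.com:5432/analytics"
)
os.environ["AIRFLOW_VAR_ENVIRONMENT"] = "staging"


def parse_conn_uri(uri):
    """Split a connection URI into its parts (illustrative, not Airflow's parser)."""
    parts = urlsplit(uri)
    return {
        "conn_type": parts.scheme,
        "login": parts.username,
        "password": parts.password,
        "host": parts.hostname,
        "port": parts.port,
        "schema": parts.path.lstrip("/"),
    }


conn = parse_conn_uri(os.environ["AIRFLOW_CONN_MY_POSTGRES"])
env_name = os.environ["AIRFLOW_VAR_ENVIRONMENT"]
print(conn["host"], conn["port"], env_name)  # db.example.com 5432 staging
```

Keeping credentials in connections rather than in DAG files means the same DAG code can run against different environments without edits.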

Test Yourself

Q: What is the difference between a Task and an Operator?

Q: What does "Directed Acyclic" mean in DAG?

Q: When should you NOT use XCom?

Interview Questions

Q: Explain execution_date. Why does a daily DAG for Jan 15 run on Jan 16?

execution_date (now logical_date) is the start of the data interval, not the run time. A daily DAG processes data for a given day and runs at the end of that interval (the start of the next). So data for Jan 15 (00:00-23:59) is processed when the interval closes on Jan 16 at 00:00.

Q: What happens when a task fails? How does retry work?

When an attempt fails and retries > 0, the task moves to up_for_retry, waits retry_delay, then re-queues. After exhausting retries, it is marked failed. Downstream tasks are then marked upstream_failed and do not run (unless trigger_rule is set differently). You can configure on_failure_callback for alerts (Slack, email).

Q: Compare CeleryExecutor vs KubernetesExecutor.

Q: How do you handle dependencies between DAGs (not just tasks)?

(1) TriggerDagRunOperator: one DAG triggers another. (2) ExternalTaskSensor: wait for a task in another DAG to complete. (3) Datasets (Airflow 2.4+): DAG A produces a dataset, and DAG B is triggered when the dataset is updated. Datasets are the modern, preferred approach.