What is Apache Airflow?

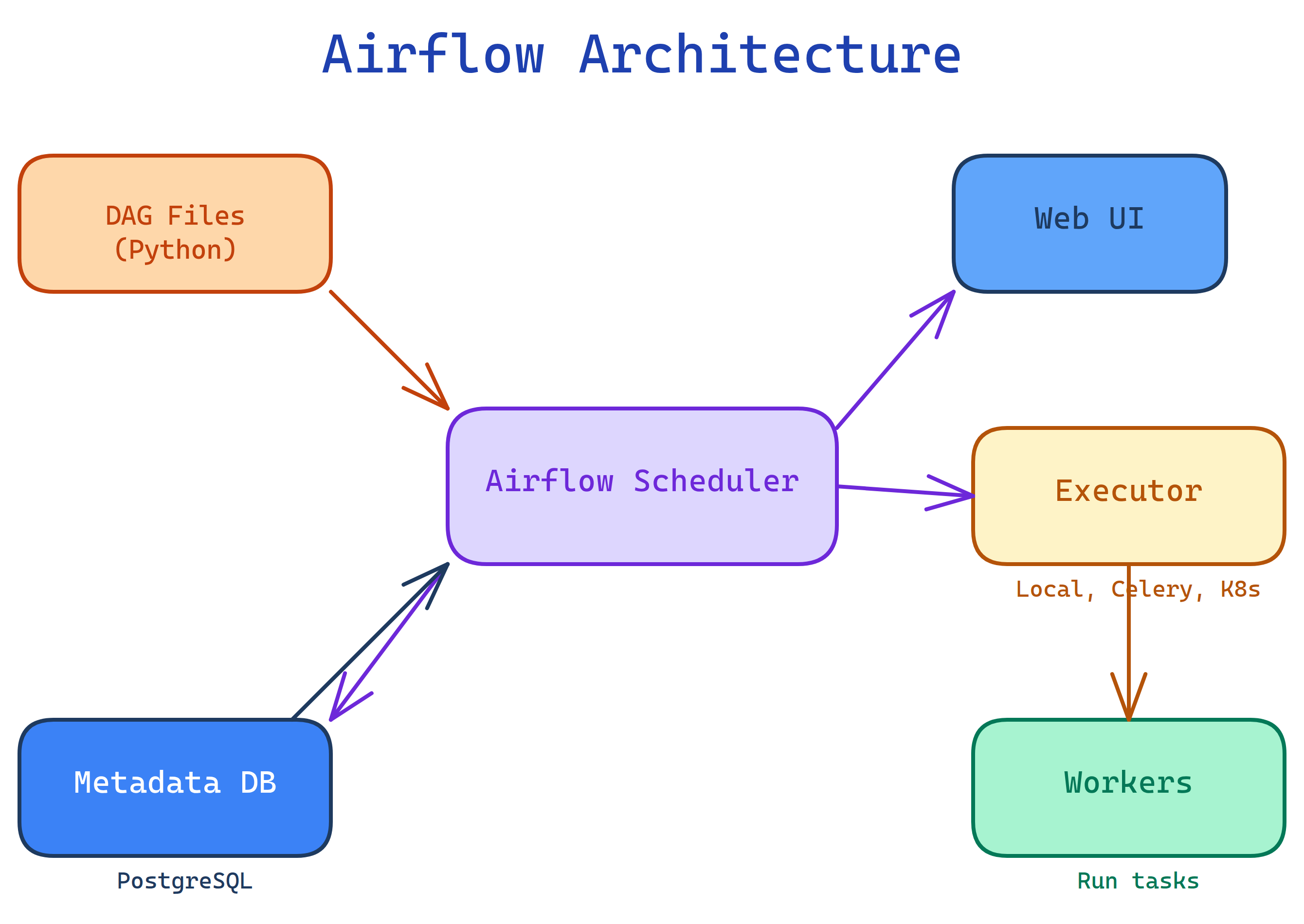

Airflow is an open-source workflow orchestrator. You define your data pipelines as Python code in the form of DAGs (directed acyclic graphs: tasks connected by one-way dependencies, with no loops), Airflow schedules and runs them, and you monitor everything through a web UI. Think of it as cron on steroids: a scheduler that also understands which jobs depend on which.

The Big Picture

Airflow orchestrates work. You tell it what to do, when to do it, and in what order.

Explain Like I'm 12

Imagine you're the manager of a factory assembly line. You have a clipboard with the order of steps: first cut the wood, then sand it, then paint it, then dry it. Some steps can happen at the same time (cutting two boards). Some must wait (can't paint before sanding).

Airflow is that clipboard — but automatic. You write the steps and their order in Python. Airflow runs them on schedule, watches for failures, retries broken steps, and shows you a dashboard of everything that's happening. You never have to stand over the assembly line again.
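To make the clipboard idea concrete, here is a minimal sketch of the assembly line using only Python's standard library (`graphlib`, Python 3.9+). It is not Airflow code and does not use Airflow's API; the step names and the `execution_waves` helper are made up for illustration. It only shows how an ordering, and the set of steps that may run side by side, falls out of a list of dependencies.

```python
from graphlib import TopologicalSorter

# Each step maps to the set of steps that must finish before it can start.
# Step names are hypothetical, taken from the factory analogy.
steps = {
    "cut_board_a": set(),
    "cut_board_b": set(),
    "sand": {"cut_board_a", "cut_board_b"},
    "paint": {"sand"},
    "dry": {"paint"},
}


def execution_waves(graph):
    """Group steps into waves; all steps in one wave could run at the same time."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only to keep output stable
        waves.append(ready)
        sorter.done(*ready)  # mark the whole wave finished, unlocking the next one
    return waves


for number, wave in enumerate(execution_waves(steps), start=1):
    print(f"wave {number}: {', '.join(wave)}")
```

The two cutting steps land in the same wave because neither depends on the other, while sanding, painting, and drying each wait for the step before them.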

What is Airflow?

Apache Airflow was created at Airbnb in 2014 and became an Apache top-level project in 2019. It is one of the most widely used workflow orchestrators in data engineering.

Key characteristics:

- Workflows as code — DAGs are Python files, so you get version control, code review, and testing for free

- Dependency-aware — tasks run only when their upstream dependencies succeed

- Scheduled — cron-like scheduling with catchup for missed runs

- Extensible — 1000+ pre-built operators for AWS, GCP, Azure, databases, APIs, and more

- Observable — rich web UI with DAG visualizations, logs, and task history
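The "catchup" idea in the Scheduled bullet can be sketched with the standard library. This is not Airflow's scheduler logic; the function name `missed_runs` and its parameters are hypothetical. The sketch assumes a fixed interval and the convention that a run covering a period only becomes due once that period has ended.

```python
from datetime import datetime, timedelta


def missed_runs(start, now, interval, last_run=None):
    """Return the start times of fully elapsed intervals that have not run yet.

    start:    start of the first scheduled interval
    now:      current time
    interval: length of each scheduled interval
    last_run: start time of the most recent completed run, if any
    """
    current = start if last_run is None else last_run + interval
    due = []
    # An interval is due only after it has ended: current + interval <= now.
    while current + interval <= now:
        due.append(current)
        current += interval
    return due


# A daily pipeline that was switched off from Jan 1 until midday on Jan 4.
backlog = missed_runs(
    start=datetime(2024, 1, 1),
    now=datetime(2024, 1, 4, 12, 0),
    interval=timedelta(days=1),
)
for run_start in backlog:
    print("catch up run for", run_start.date())
```

With plain cron, those three days would simply be lost. An orchestrator that tracks which intervals have run can work out the gap and fill it in.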

Who is it for?

Data engineers building ETL/ELT pipelines. ML engineers orchestrating training jobs. Analytics engineers scheduling dbt runs. DevOps teams automating infrastructure tasks. Anyone who has outgrown cron jobs and needs visibility into complex, multi-step workflows.

What can Airflow do?

- ETL/ELT pipelines — extract data from APIs, transform in Spark/SQL, load to warehouse

- ML pipelines — train models, validate, deploy to production

- Data quality checks — run tests on data after each load

- Report generation — build and email reports on a schedule

- Infrastructure automation — spin up/down clusters, manage resources

- Cross-system orchestration — coordinate tasks across Spark, Kubernetes, cloud services, databases

Airflow vs Others

| Feature | Airflow | Prefect | Dagster | Cron |
|---|---|---|---|---|
| Language | Python | Python | Python | Shell |
| UI | Rich web UI | Web UI (self-hosted or Cloud) | Dagster UI (formerly Dagit) | None |
| Dependencies | DAG-based | Flow-based | Asset-based | None |
| Retries | Built-in | Built-in | Built-in | Manual |
| Ecosystem | Largest (1000+ operators) | Growing | Growing | N/A |
| Best for | Complex pipelines at scale | Modern Python workflows | Data asset management | Simple recurring jobs |
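The Dependencies and Retries rows are where cron differs most from the orchestrators. As a rough illustration of what "built-in" means there, the toy runner below retries failing tasks and refuses to start a task whose upstream did not succeed. It uses only the standard library and is not how Airflow is implemented; `run_pipeline`, its parameters, and the example tasks are hypothetical. The state labels are borrowed from the names Airflow shows for task states.

```python
from graphlib import TopologicalSorter


def run_pipeline(tasks, upstream, retries=2):
    """Run callables in dependency order.

    tasks:    task name -> zero-argument callable
    upstream: task name -> set of task names that must succeed first
    Returns:  task name -> "success", "failed", or "upstream_failed"
    """
    graph = {name: upstream.get(name, set()) for name in tasks}
    state = {}
    # static_order() yields every task after all of its upstream tasks.
    for name in TopologicalSorter(graph).static_order():
        if any(state[dep] != "success" for dep in graph[name]):
            state[name] = "upstream_failed"  # never started
            continue
        for _attempt in range(1 + retries):
            try:
                tasks[name]()
                state[name] = "success"
                break
            except Exception:
                state[name] = "failed"  # stays failed if every attempt raises
    return state


attempts = {"extract": 0}


def flaky_extract():
    # Fails on the first call, succeeds on the retry.
    attempts["extract"] += 1
    if attempts["extract"] < 2:
        raise ConnectionError("temporary outage")


def transform():
    raise ValueError("bad row")  # fails on every attempt


def load():
    pass


result = run_pipeline(
    tasks={"extract": flaky_extract, "transform": transform, "load": load},
    upstream={"transform": {"extract"}, "load": {"transform"}},
)
print(result)
```

Here `extract` recovers on its second attempt, `transform` exhausts its retries and fails, and `load` is never started. A crontab with three independent entries would have run `load` regardless.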

Test Yourself

What does DAG stand for?

Why is Airflow better than cron for data pipelines?

What language are Airflow DAGs written in?