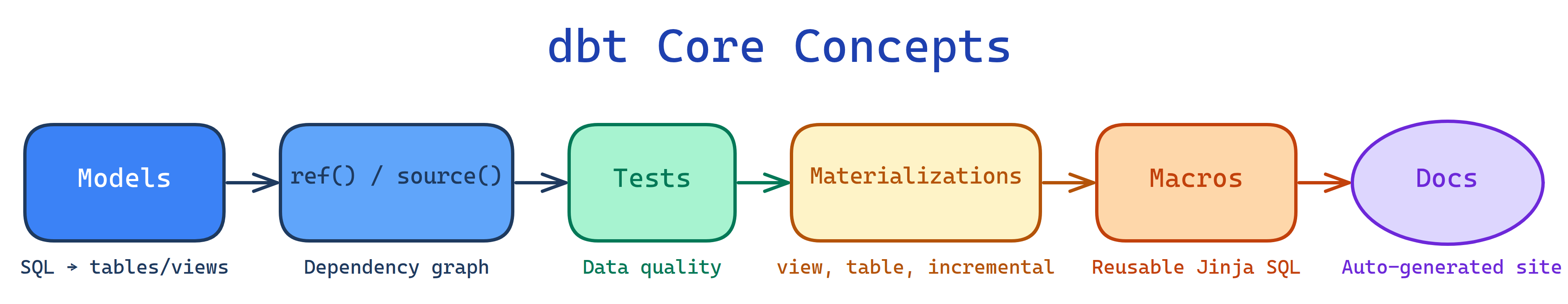

Core Concepts of dbt

dbt has 6 building blocks: Models (SQL files → tables/views), ref() & source() (dependency graph), Tests (data quality), Materializations (how models are built), Macros (reusable Jinja SQL), and Documentation (auto-generated docs site).

The Big Picture

Explain Like I'm 12

Models = recipes (each one makes a dish). ref() = "use the output of that other recipe" (mashed potatoes need the boiled potatoes). Tests = taste-testing at each step. Materializations = do you serve it fresh every time (view) or make a big batch and store it (table)? Macros = that one trick your grandma taught you that works in every recipe. Docs = the cookbook that describes every recipe.

Cheat Sheet

| Concept | What It Does | CLI Command |

|---|---|---|

| Models | SQL SELECT → table or view in warehouse | dbt run |

| ref() / source() | Reference models / raw tables, builds DAG | (used in SQL) |

| Tests | Assert data quality (unique, not_null, etc.) | dbt test |

| Materializations | table, view, incremental, ephemeral | (configured in YAML/SQL) |

| Macros | Reusable Jinja SQL functions | (called in models) |

| Docs | Auto-generated documentation site | dbt docs generate && dbt docs serve |

The 6 Building Blocks

Models — SQL Files That Become Tables

A model is a .sql file containing a single SELECT statement. When you run dbt run, dbt wraps each model in DDL (CREATE TABLE/VIEW) and executes it in the warehouse.

-- models/staging/stg_orders.sql

SELECT

id AS order_id,

user_id AS customer_id,

order_date,

status,

amount

FROM {{ source('raw', 'orders') }}

WHERE status != 'deleted'You write the SELECT. dbt writes the CREATE TABLE AS, handles schema names, and runs models in dependency order.

models/ directory. Organize by layer: models/staging/, models/intermediate/, models/marts/. Each directory can have a _schema.yml file for tests and docs.

ref() & source() — The Dependency Graph

ref() references another dbt model. source() references raw tables loaded by your EL tool.

-- models/marts/fct_orders.sql

-- ref() builds the DAG: this model depends on stg_orders and stg_customers

SELECT

o.order_id,

o.customer_id,

c.customer_name,

o.amount,

o.order_date

FROM {{ ref('stg_orders') }} o

LEFT JOIN {{ ref('stg_customers') }} c ON o.customer_id = c.customer_id# models/staging/_sources.yml

sources:

- name: raw

database: analytics_raw

schema: public

tables:

- name: orders

loaded_at_field: _etl_loaded_at

freshness:

warn_after: {count: 12, period: hour}

error_after: {count: 24, period: hour}ref() and source(). They resolve the correct database/schema, enable lineage tracking, and ensure correct execution order.

Tests — Data Quality Guards

dbt has two types of tests:

Generic Tests (YAML-defined)

# models/staging/_schema.yml

models:

- name: stg_orders

columns:

- name: order_id

tests:

- unique

- not_null

- name: status

tests:

- accepted_values:

values: ['pending', 'shipped', 'delivered', 'returned']

- name: customer_id

tests:

- relationships:

to: ref('stg_customers')

field: customer_idSingular Tests (custom SQL)

-- tests/assert_positive_amounts.sql

-- Returns rows that FAIL the test (should return 0 rows)

SELECT order_id, amount

FROM {{ ref('fct_orders') }}

WHERE amount < 0dbt run: dbt build runs models AND tests in order. A failing test on a staging model blocks downstream marts.

Materializations — How Models Are Built

| Type | What It Creates | Rebuild Cost | Best For |

|---|---|---|---|

| view | SQL view (no stored data) | Instant | Simple transforms, staging models |

| table | Physical table (full rebuild) | Slow on large data | End-user models, complex transforms |

| incremental | Table + only process new rows | Fast (only delta) | Event tables, logs, large fact tables |

| ephemeral | CTE injected into parent model | None (not materialized) | Reusable logic, avoiding intermediate tables |

-- models/marts/fct_events.sql

{{

config(

materialized='incremental',

unique_key='event_id'

)

}}

SELECT * FROM {{ ref('stg_events') }}

{% if is_incremental() %}

WHERE event_timestamp > (SELECT MAX(event_timestamp) FROM {{ this }})

{% endif %}Macros & Jinja — Reusable SQL

Jinja is a templating language mixed into dbt SQL. Macros are reusable Jinja functions.

-- macros/cents_to_dollars.sql

{% macro cents_to_dollars(column_name) %}

({{ column_name }} / 100.0)::numeric(10,2)

{% endmacro %}

-- Usage in a model:

SELECT

order_id,

{{ cents_to_dollars('amount_cents') }} AS amount_dollars

FROM {{ ref('stg_orders') }}packages.yml. Popular: dbt-utils (surrogate keys, pivoting, date spine), dbt-expectations (Great Expectations-style tests), codegen (auto-generate model YAML).

Documentation — Auto-Generated Docs

dbt generates a searchable website from your YAML descriptions with a visual lineage graph.

# models/marts/_schema.yml

models:

- name: fct_orders

description: "One row per order with customer details. Grain: order_id."

columns:

- name: order_id

description: "Primary key from source system"

- name: amount_dollars

description: "Order total in USD (converted from cents)"dbt docs generate # Creates the docs site

dbt docs serve # Opens it in your browserTest Yourself

Q: What is the difference between ref() and source()?

ref() references another dbt model (a .sql file you wrote). source() references a raw table loaded by an external tool (Fivetran, Airbyte). Both build the dependency graph, but source() also enables freshness checks.Q: When would you use an incremental materialization vs a table?

Q: What does dbt build do?

dbt build runs models AND tests in DAG order. If a test fails on a model, downstream models are skipped. It combines dbt run + dbt test + dbt snapshot + dbt seed into one command.Interview Questions

Q: Explain the dbt project structure. What goes where?

models/ — SQL models organized by layer (staging/, intermediate/, marts/). tests/ — singular test SQL files. macros/ — reusable Jinja functions. seeds/ — CSV files loaded as tables. snapshots/ — SCD Type 2 definitions. dbt_project.yml — project config (name, version, materializations). profiles.yml — warehouse connection details (local only, not committed).Q: What is a snapshot in dbt?

dbt_valid_from and dbt_valid_to timestamps. When a row changes, the old version gets an end timestamp and a new version is inserted. Configured with a strategy (timestamp or check) and unique_key.Q: How does dbt handle incremental models? What happens on the first run?

is_incremental() Jinja macro. If the filter logic is wrong, run dbt run --full-refresh to rebuild from scratch. The unique_key config handles upserts (merge/insert-overwrite).