What is dbt?

dbt (data build tool) is the "T" in ELT. Raw data is already loaded into your warehouse (by Fivetran, Airbyte, etc.). dbt transforms it using SQL SELECT statements organized as models, with version control, automated tests, and auto-generated documentation. You write SQL; dbt handles the DDL.

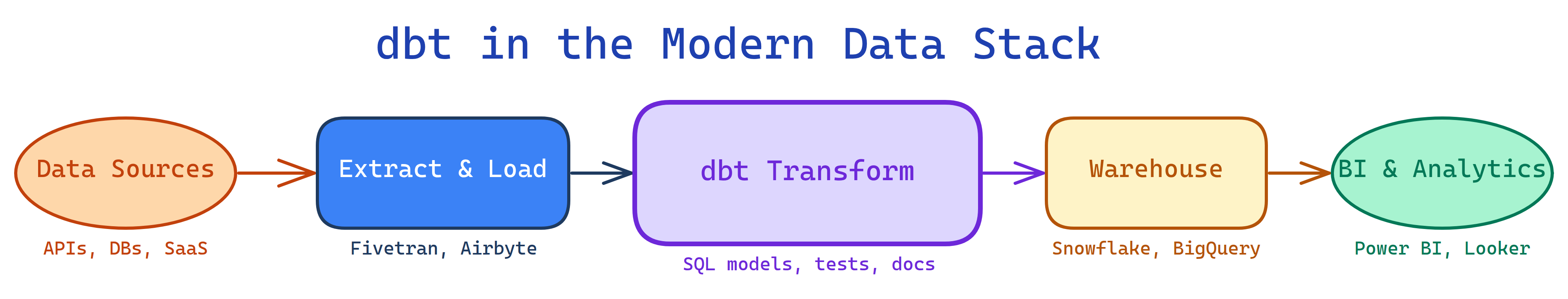

The Big Picture

dbt sits between raw data and analytics-ready tables. It only does transformations — no extraction, no loading.

Explain Like I'm 12

Imagine a factory. Trucks deliver raw materials (that's extraction & loading). dbt is the assembly line inside the factory — it takes raw materials and turns them into finished products. You write instructions (SQL) for each station on the line: "take this steel, cut it here, weld it there." dbt runs the stations in the right order, checks quality at each step, and documents what every station does.

The key insight: dbt doesn't move data in or out. It only transforms data that's already in the warehouse.

What is dbt?

dbt was created by dbt Labs (formerly Fishtown Analytics) in 2016. It popularized the analytics engineering role and the ELT pattern (Extract-Load-Transform), where transformations happen inside the warehouse rather than before loading.

Key principles:

- SQL-first — write SELECT statements, dbt wraps them in CREATE TABLE/VIEW DDL

- Version controlled — models are .sql files in a Git repo

- Tested — built-in tests for uniqueness, not-null, accepted values, relationships

- Documented — auto-generated docs site from YAML descriptions

- Modular — models reference other models with

ref(), building a DAG - DRY — Jinja templating for reusable SQL macros

It comes in two flavors:

Who is it for?

Analytics engineers who own the transformation layer. Data analysts who want to apply software engineering practices to SQL. Data engineers who need testable, documented data pipelines. Anyone building a modern data stack with Snowflake, BigQuery, Redshift, or Databricks.

What can dbt do?

- Transform raw data into clean tables — staging, intermediate, and mart models

- Build a dependency graph (DAG) — models reference each other with

ref() - Test data quality — assert uniqueness, not-null, referential integrity, custom SQL tests

- Generate documentation — a searchable website with lineage graphs from YAML descriptions

- Manage incremental loads — only process new/changed rows instead of full rebuilds

- Create snapshots — track slowly changing dimensions (SCD Type 2)

- Reuse logic with macros — Jinja-templated SQL for DRY patterns

- Run CI/CD — test every PR against the warehouse before merging

dbt vs Others

| Feature | dbt | Stored Procedures | Airflow + SQL | Dataform |

|---|---|---|---|---|

| Language | SQL + Jinja | SQL/PL/pgSQL | Python + SQL | SQLX |

| Version control | Git-native | Manual | Git (DAG code) | Git-native |

| Testing | Built-in | None | Custom | Built-in |

| Documentation | Auto-generated | None | Manual | Auto-generated |

| Lineage | Visual DAG | None | Airflow DAG view | Visual DAG |

| Orchestration | dbt Cloud or external | DB scheduler | Built-in | Built-in |

| Best for | Analytics engineering | Legacy systems | Complex pipelines | BigQuery teams |

What you'll learn

Test Yourself

What does dbt stand for and what does it do?

What is the ref() function and why is it important?

ref('model_name') references another dbt model. It builds the dependency graph (DAG) automatically, handles schema/database name resolution, and ensures models run in the right order.What is the difference between dbt Core and dbt Cloud?