CI/CD Testing Pipelines

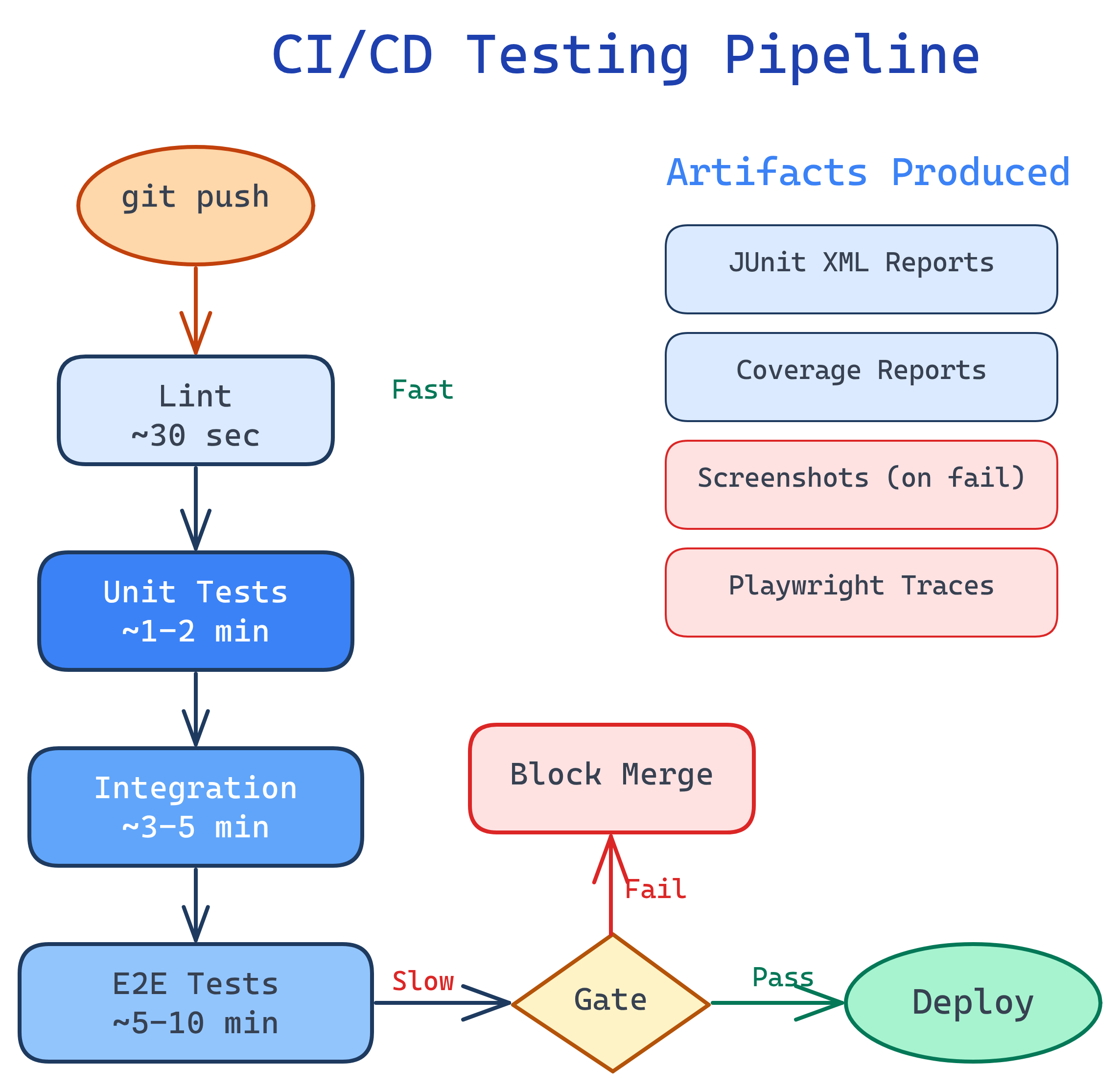

A CI/CD testing pipeline runs your tests automatically on every push. Structure it in stages — lint → unit → integration → E2E — with quality gates that block merges on failure. Use parallel execution to keep it fast and test artifacts to debug failures.

Explain Like I'm 12

Imagine you have a factory that makes toys. Before shipping any toy, it goes through checkpoints:

- Quick look — Does it look right? (lint check)

- Parts check — Does each piece work? (unit tests)

- Assembly check — Do the pieces fit together? (integration tests)

- Play test — Can a kid actually play with it? (E2E tests)

A CI/CD pipeline is that factory line for your code. Every time someone makes a change, the code automatically goes through all these checkpoints. If it fails any checkpoint, it's sent back for fixes before it can ship.

Anatomy of a Test Pipeline

A well-designed test pipeline runs tests in order of speed: fast tests first, slow tests last. If fast tests fail, you skip the slow ones — saving time and compute.
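The fail-fast ordering described above can be sketched in a few lines of Python. This is only an illustration: `run_pipeline` and the stage lambdas are hypothetical stand-ins for real commands such as `ruff check .` or `pytest tests/unit/`.

```python
from typing import Callable, List, Tuple

# Each stage is a (name, check) pair; check returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failure.

    Returns the names of the stages that actually ran.
    """
    executed = []
    for name, check in stages:
        executed.append(name)
        if not check():
            break  # fail fast: later (slower) stages are skipped
    return executed


# Hypothetical stages, ordered fastest to slowest.
stages = [
    ("lint", lambda: True),
    ("unit", lambda: False),  # simulate a unit test failure
    ("integration", lambda: True),
    ("e2e", lambda: True),
]
ran = run_pipeline(stages)
print(ran)  # ['lint', 'unit']
```

Because the unit stage fails, the integration and E2E stages never start, which is the time and compute saving the ordering is meant to deliver.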

GitHub Actions: Complete Pipeline

Here's a production-ready test pipeline using GitHub Actions. It runs lint, unit, integration, and E2E tests in separate jobs with proper dependencies.

name: Test Pipeline

on:
  push:
    branches: [main]
  pull_request:
    branches: [main]

jobs:
  lint:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with: { python-version: '3.12' }
      - run: pip install ruff
      - run: ruff check .
      - run: ruff format --check .

  unit:
    needs: lint
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with: { python-version: '3.12' }
      - run: pip install -r requirements-test.txt
      - run: pytest tests/unit/ -v --junitxml=unit-results.xml --cov=src --cov-report=xml
      - uses: actions/upload-artifact@v4
        if: always()
        with:
          name: unit-results
          path: unit-results.xml

  integration:
    needs: unit
    runs-on: ubuntu-latest
    services:
      postgres:
        image: postgres:16
        env:
          POSTGRES_PASSWORD: testpass
          POSTGRES_DB: testdb
        ports: ['5432:5432']
        options: >-
          --health-cmd pg_isready
          --health-interval 10s
          --health-timeout 5s
          --health-retries 5
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with: { python-version: '3.12' }
      - run: pip install -r requirements-test.txt
      - run: pytest tests/integration/ -v --junitxml=integration-results.xml
        env:
          DATABASE_URL: postgresql://postgres:testpass@localhost:5432/testdb

  e2e:
    needs: integration
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with: { python-version: '3.12' }
      - run: pip install -r requirements-test.txt
      - run: playwright install --with-deps chromium
      # --tracing retain-on-failure (pytest-playwright) keeps traces for failed tests in test-results/
      - run: pytest tests/e2e/ -v --junitxml=e2e-results.xml --tracing retain-on-failure
      - uses: actions/upload-artifact@v4
        if: failure()
        with:
          name: playwright-traces
          path: test-results/

Use if: always() on artifact uploads so you get test reports even when tests fail, which is exactly when you need them. Use if: failure() for Playwright traces to save storage on passing runs.

Parallel Execution

Slow test suites kill developer productivity. Parallel execution is the most impactful optimization — splitting tests across multiple workers or machines.
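To make the idea of splitting concrete, here is a minimal sketch of a duration-aware split: sort tests from slowest to fastest and hand each one to the shard with the least work so far. It is a simplified greedy heuristic, not the implementation used by any particular plugin, and `split_by_duration` and the timings are made up for illustration.

```python
from typing import Dict, List


def split_by_duration(durations: Dict[str, float], shards: int) -> List[List[str]]:
    """Greedy split: assign the slowest remaining test to the lightest shard."""
    groups: List[List[str]] = [[] for _ in range(shards)]
    totals = [0.0] * shards
    # Sort by duration (descending); the name breaks ties deterministically.
    for name, seconds in sorted(durations.items(), key=lambda kv: (-kv[1], kv[0])):
        lightest = totals.index(min(totals))
        groups[lightest].append(name)
        totals[lightest] += seconds
    return groups


# Hypothetical per-test timings in seconds.
timings = {
    "test_checkout": 60.0,
    "test_login": 30.0,
    "test_search": 30.0,
    "test_profile": 20.0,
    "test_logout": 10.0,
}
shards = split_by_duration(timings, 2)
print(shards)
# [['test_checkout', 'test_profile'], ['test_login', 'test_search', 'test_logout']]
```

The two shards take 80 and 70 seconds instead of 150 seconds on one worker. The split only helps if tests are isolated, since any test may now run on any machine in any grouping.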

pytest-xdist (Python)

# Run tests across 4 CPU cores
pytest tests/ -n 4

# Auto-detect available cores
pytest tests/ -n auto

# Split by file (each worker gets complete test files)
pytest tests/ -n 4 --dist loadfile

GitHub Actions Matrix Strategy

# Split E2E tests across 3 parallel machines
# (--splits and --group are provided by the pytest-split plugin)
e2e:
  runs-on: ubuntu-latest
  strategy:
    matrix:
      shard: [1, 2, 3]
  steps:
    - uses: actions/checkout@v4
    - uses: actions/setup-python@v5
      with: { python-version: '3.12' }
    - run: pip install -r requirements-test.txt
    - run: playwright install --with-deps chromium
    - run: |
        pytest tests/e2e/ \
          --splits 3 \
          --group ${{ matrix.shard }} \
          --splitting-algorithm least_duration

Quality Gates

Quality gates are automated checkpoints that block code from merging if it doesn't meet quality standards.

| Gate | What It Checks | Typical Threshold |
|---|---|---|
| All tests pass | Zero test failures | 100% pass rate |
| Code coverage | New code is tested | ≥ 80% on changed files |
| No new lint errors | Code style & quality | Zero new violations |
| Performance budget | No performance regressions | < 5% slowdown on benchmarks |
| Security scan | No known vulnerabilities | Zero critical/high CVEs |
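The gates in the table boil down to a set of boolean checks over the metrics of one build. Below is a minimal sketch of that logic, assuming a hypothetical `BuildMetrics` record; the field names are invented for illustration, and in practice the numbers would come from your test, coverage, benchmark, and scanner reports.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class BuildMetrics:
    """Hypothetical summary of one CI run; field names are illustrative."""
    failed_tests: int
    changed_files_coverage: float  # percent, 0-100
    new_lint_violations: int
    benchmark_slowdown: float      # percent relative to baseline
    critical_or_high_cves: int


def failed_gates(m: BuildMetrics) -> List[str]:
    """Return the names of the gates this build violates (empty means mergeable)."""
    checks = [
        ("All tests pass", m.failed_tests == 0),
        ("Code coverage", m.changed_files_coverage >= 80.0),
        ("No new lint errors", m.new_lint_violations == 0),
        ("Performance budget", m.benchmark_slowdown < 5.0),
        ("Security scan", m.critical_or_high_cves == 0),
    ]
    return [name for name, ok in checks if not ok]


metrics = BuildMetrics(
    failed_tests=0,
    changed_files_coverage=76.5,
    new_lint_violations=0,
    benchmark_slowdown=2.1,
    critical_or_high_cves=0,
)
violations = failed_gates(metrics)
print(violations)  # ['Code coverage']
```

A CI job built on this idea would exit with a non-zero status whenever the list is non-empty, which is what lets branch protection block the merge.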

# Enforce a coverage threshold in pytest (requires the pytest-cov plugin)
# pyproject.toml; in pytest.ini, use a [pytest] section and drop the quotes
[tool.pytest.ini_options]
addopts = "--cov=src --cov-fail-under=80"

Test Artifacts & Reporting

When tests fail in CI, you need enough information to debug without re-running locally. Upload these artifacts:

- JUnit XML — Standard test result format, supported by all CI tools for summary views

- HTML reports — Human-readable test reports (pytest-html, Allure)

- Screenshots — Captured on failure for E2E tests

- Playwright traces — Full interaction replay with DOM snapshots, network logs, and console output

- Coverage reports — HTML or XML coverage data for tracking trends
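JUnit XML is plain XML, so a downloaded artifact can be summarized with the standard library alone. The sketch below lists the failing test cases in a report; `failed_tests` is a hypothetical helper, and the sample document is a hand-written, trimmed-down report rather than real pytest output.

```python
import xml.etree.ElementTree as ET
from typing import List


def failed_tests(junit_xml: str) -> List[str]:
    """Return 'classname::name' for every test case with a failure or error."""
    root = ET.fromstring(junit_xml)
    failed = []
    # iter() finds <testcase> at any depth, so this works whether the root
    # element is <testsuites> or a single <testsuite>.
    for case in root.iter("testcase"):
        if case.find("failure") is not None or case.find("error") is not None:
            failed.append(f"{case.get('classname')}::{case.get('name')}")
    return failed


sample = """<testsuites>
  <testsuite name="pytest" tests="3" failures="1" errors="0">
    <testcase classname="tests.unit.test_cart" name="test_add_item"/>
    <testcase classname="tests.unit.test_cart" name="test_remove_item">
      <failure message="assert 1 == 0">traceback...</failure>
    </testcase>
    <testcase classname="tests.unit.test_user" name="test_signup"/>
  </testsuite>
</testsuites>"""
result = failed_tests(sample)
print(result)  # ['tests.unit.test_cart::test_remove_item']
```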

# Upload Allure results for beautiful reporting
- run: pytest tests/ --alluredir=allure-results
- uses: actions/upload-artifact@v4
  if: always()
  with:
    name: allure-results
    path: allure-results/

Caching & Optimization

CI pipelines that download and install every dependency from scratch on each run waste minutes. Caching avoids most of that repeated work.

# Cache pip dependencies
- uses: actions/setup-python@v5
  with: { python-version: '3.12' }
- uses: actions/cache@v4
  with:
    path: ~/.cache/pip
    key: ${{ runner.os }}-pip-${{ hashFiles('requirements*.txt') }}
    restore-keys: ${{ runner.os }}-pip-

# Cache Playwright browsers (saves ~1 min)
- uses: actions/cache@v4
  with:
    path: ~/.cache/ms-playwright
    key: playwright-${{ hashFiles('requirements*.txt') }}

| Optimization | Time Saved | Effort |
|---|---|---|
| Cache dependencies | 1-3 minutes | Low (add cache action) |
| Parallel test execution | 30-70% of test time | Medium (ensure test isolation) |
| Skip unchanged tests | Variable | Medium (need affected-test detection) |
| Smaller Docker images | 30-60 seconds | Low (use slim base images) |
| Run only relevant test suites | Variable | Medium (path-based triggers) |
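The cache keys above work because the key embeds a hash of the requirements files: the same content gives the same key (a cache hit), and any edit gives a new key (a fresh install). The sketch below imitates that behaviour with `hashlib`. It is not how GitHub's `hashFiles()` is implemented, and `cache_key` and the file contents are hypothetical.

```python
import hashlib
from typing import Dict


def cache_key(prefix: str, files: Dict[str, bytes]) -> str:
    """Build a key that changes whenever any tracked file's content changes."""
    digest = hashlib.sha256()
    for path in sorted(files):  # sorted so the key does not depend on dict order
        digest.update(path.encode("utf-8"))
        digest.update(b"\0")
        digest.update(files[path])
        digest.update(b"\0")
    return f"{prefix}-{digest.hexdigest()[:16]}"


before = cache_key("Linux-pip", {"requirements.txt": b"pytest==8.0.0\n"})
same = cache_key("Linux-pip", {"requirements.txt": b"pytest==8.0.0\n"})
after = cache_key("Linux-pip", {"requirements.txt": b"pytest==8.1.0\n"})

print(before == same)   # True: unchanged requirements reuse the cache
print(before == after)  # False: a version bump produces a new key
```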

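Key each cache on the files that define its contents (for example, hashFiles() in the cache key) so that a dependency change invalidates it.

Test Yourself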

Why should you run unit tests before E2E tests in a CI pipeline?

What's a quality gate and why is it important?

Why must parallel tests be isolated from each other?

What should you upload as CI artifacts when tests fail?

How does caching reduce CI pipeline time?

Interview Questions

Design a CI/CD test strategy for a team shipping a web application with a Python backend and React frontend.

Pipeline stages (in order):

- Lint & Format — ruff (Python) + ESLint/Prettier (JS) — runs in ~30s

- Unit Tests — pytest for backend, Jest for frontend — runs in parallel (~1-2 min)

- Integration Tests — API tests hitting a Postgres service container — (~2-3 min)

- E2E Tests — Playwright testing full user flows in Chrome — (~5-8 min, sharded across 3 workers)

Quality gates: all tests pass + 80% coverage on changed files. Branch protection requires all status checks. Artifacts: JUnit XML, coverage XML to Codecov, Playwright traces on failure.

Your CI pipeline takes 45 minutes to run. How do you cut it to under 15 minutes?

Step 1: Profile — find where time is spent (install? tests? build?).

Step 2: Cache dependencies — typically saves 2-5 min.

Step 3: Parallelize tests — use pytest-xdist or shard across matrix workers. This alone can cut 50-70% of test time.

Step 4: Run independent jobs concurrently — lint, backend tests, and frontend tests don't depend on each other.

Step 5: Skip irrelevant tests. Use path filters so that, for example, a docs-only change skips the E2E suite and a frontend-only change skips the backend unit tests.

Step 6: Optimize Docker — use slim images, multi-stage builds, cached layers.
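The effect of Step 4 can be estimated before touching the workflow: the wall-clock time of a pipeline is the longest chain of dependent jobs, not the sum of all jobs. Here is a small sketch of that calculation. The job names, durations, and `pipeline_minutes` helper are made-up illustrations, and the dependency graph is assumed to be acyclic.

```python
from functools import lru_cache
from typing import Dict, List


def pipeline_minutes(durations: Dict[str, float], needs: Dict[str, List[str]]) -> float:
    """Wall-clock time when every job starts as soon as its dependencies finish."""

    @lru_cache(maxsize=None)
    def finish(job: str) -> float:
        # A job starts when its slowest dependency finishes (or at 0 if it has none).
        start = max((finish(dep) for dep in needs.get(job, [])), default=0.0)
        return start + durations[job]

    return max(finish(job) for job in durations)


# Hypothetical job durations in minutes.
durations = {"lint": 1, "backend": 6, "frontend": 4, "e2e": 8}

# Every job waits for the previous one.
chained = {"backend": ["lint"], "frontend": ["backend"], "e2e": ["frontend"]}
# lint, backend, and frontend run concurrently; e2e waits for both test jobs.
fanned = {"e2e": ["backend", "frontend"]}

print(pipeline_minutes(durations, chained))  # 19.0
print(pipeline_minutes(durations, fanned))   # 14.0
```

The trade-off is that concurrent jobs no longer fail fast: lint errors stop blocking the test jobs, so you spend more compute to get a shorter wait.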

How do you handle flaky tests in a CI pipeline without ignoring real failures?

Strategy:

- Auto-retry with a limit: retry a failed test up to 2 times. If it passes on a retry, flag it as flaky but don't block the pipeline.

- Track the flaky rate: any test that needs a retry goes on a "flaky list" dashboard.

- Quarantine threshold: if a test is flaky in more than 5% of runs, move it to a non-blocking "quarantine" job and file a ticket.

- Root cause fix: the quarantine ticket creates pressure to fix the underlying issue (usually timing, shared state, or an external dependency).
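The retry and quarantine rules above can be sketched as two small functions. This is an illustration of the decision logic only: `run_with_retries` and `should_quarantine` are hypothetical names, and in a real pipeline a retry plugin and a results database would normally play these roles.

```python
from typing import Callable, Tuple


def run_with_retries(test: Callable[[], bool], max_retries: int = 2) -> Tuple[bool, bool]:
    """Run a test, retrying failures up to max_retries times.

    Returns (passed, flaky); flaky means it failed at first and passed on a retry.
    """
    for attempt in range(max_retries + 1):
        if test():
            return True, attempt > 0
    return False, False  # failed on every attempt: treat as a real failure


def should_quarantine(flaky_runs: int, total_runs: int, threshold: float = 0.05) -> bool:
    """Quarantine when the flaky rate exceeds the threshold (5% by default)."""
    return total_runs > 0 and flaky_runs / total_runs > threshold


# Simulated test that fails once, then passes.
outcomes = iter([False, True])
passed, flaky = run_with_retries(lambda: next(outcomes))
print(passed, flaky)  # True True

print(should_quarantine(flaky_runs=6, total_runs=100))  # True
print(should_quarantine(flaky_runs=5, total_runs=100))  # False
```

A test that fails on all three attempts is reported as a genuine failure, so retries hide intermittent noise without masking a consistent regression.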