Multi-Agent Systems

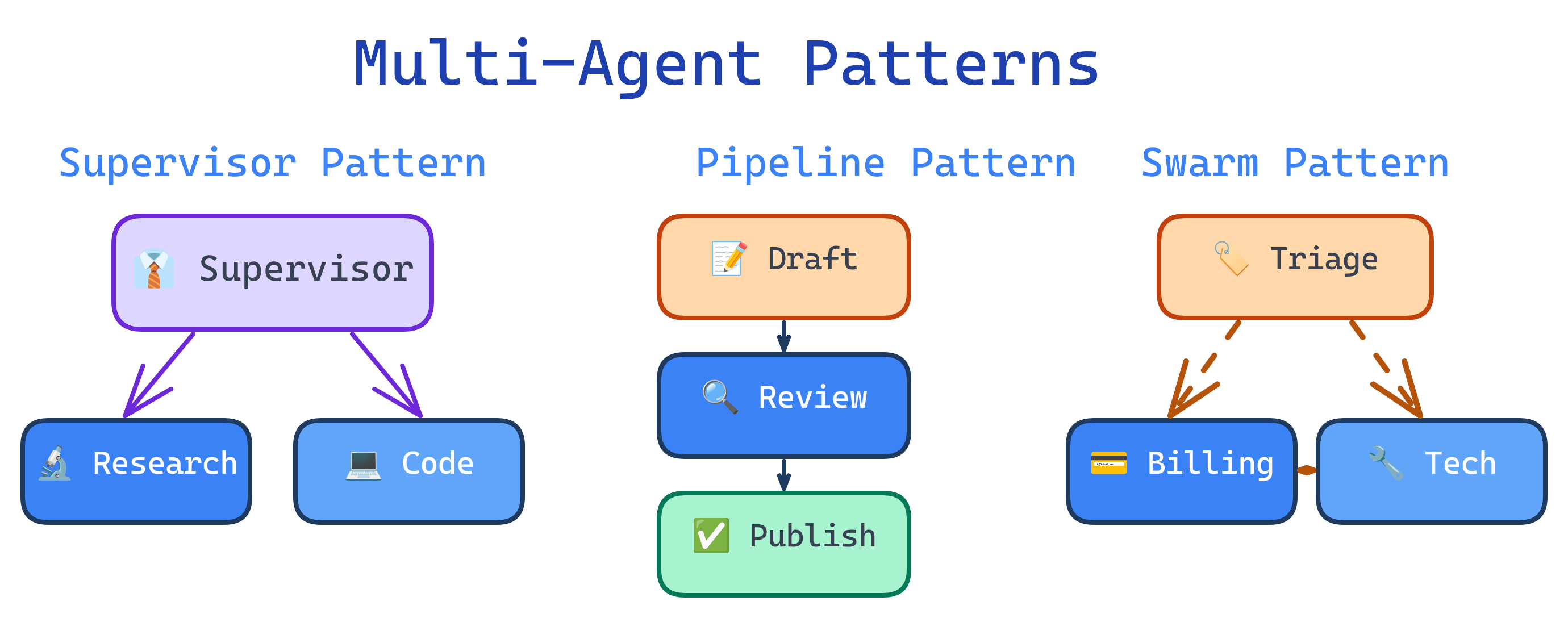

Multi-agent systems use multiple specialized agents working together instead of one monolithic agent. Key patterns: Supervisor (one agent delegates to workers), Debate (agents argue to find better answers), Pipeline (agents process in sequence), and Swarm (dynamic hand-offs between peers). Use multi-agent when tasks need different expertise, parallelism, or quality checks — but start with a single agent first.

Explain Like I'm 12

Imagine you're running a group project at school. You could try to do everything yourself — research, writing, design, and presenting. But it's way better to split the work. One friend does research, another writes the report, another makes the slides. That's a multi-agent system! Each "agent" is a specialist. There's usually a team leader (the supervisor agent) who decides who does what, checks the work, and puts it all together. Sometimes two agents debate a question to make sure the answer is really good — like having two friends argue about whether a fact is right before putting it in the report.

Multi-Agent Patterns

When to Use Multi-Agent

Multi-agent systems add complexity. Only use them when a single agent genuinely isn't enough:

| Use Multi-Agent When... | Stick With Single Agent When... |
|---|---|
| Task requires different expertise (code + design + testing) | One skillset covers the entire task |
| Subtasks can run in parallel for speed | Steps are strictly sequential |
| You need quality checks (reviewer agent) | Output quality is acceptable without review |
| Context window is too small for everything | All context fits in one conversation |
| You want separation of concerns (security) | All operations have the same trust level |
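The parallelism row is the easiest one to see in code. The sketch below assumes worker calls are I/O-bound, meaning they spend their time waiting on an LLM API. `run_worker` and `fan_out` are hypothetical names, and `asyncio.sleep` stands in for a real agent call. When independent subtasks are launched together with `asyncio.gather`, wall-clock time tracks the slowest worker rather than the sum of all of them.

```python
import asyncio
import time


async def run_worker(name: str, subtask: str, seconds: float) -> str:
    # Hypothetical stand-in for a real agent call; sleeping mimics waiting on an LLM API
    await asyncio.sleep(seconds)
    return f"{name}: finished {subtask}"


async def fan_out(assignments: list[tuple[str, str, float]]) -> list[str]:
    # Start every independent subtask at once; gather returns results in input order
    return await asyncio.gather(
        *(run_worker(name, subtask, seconds) for name, subtask, seconds in assignments)
    )


if __name__ == "__main__":
    start = time.perf_counter()
    results = asyncio.run(fan_out([
        ("researcher", "survey libraries", 0.5),
        ("coder", "write prototype", 0.5),
        ("designer", "draft UI", 0.5),
    ]))
    print(results)
    # Roughly 0.5s, because the three workers wait concurrently
    print(f"elapsed: {time.perf_counter() - start:.2f}s")
```

This only helps when the subtasks really are independent. If the coder needs the researcher's output, those two steps stay sequential.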

Supervisor Pattern

One supervisor agent receives the task, breaks it into subtasks, delegates to specialized worker agents, collects results, and assembles the final output. This is the most common multi-agent pattern.
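An LLM returns its plan as text. Before delegating anything, the supervisor has to turn that text into (worker, subtask) pairs and reject any step it cannot execute. The sketch below assumes the supervisor prompt asks for a JSON list of `[worker, subtask]` pairs. `parse_plan` is a hypothetical helper, not part of any library.

```python
import json


def parse_plan(raw_plan: str, available_workers: set[str]) -> list[tuple[str, str]]:
    """Turn the supervisor LLM's JSON reply into validated (worker, subtask) pairs.

    Assumes the LLM was asked for a JSON list of [worker_name, subtask] pairs.
    """
    try:
        items = json.loads(raw_plan)
    except json.JSONDecodeError as exc:
        raise ValueError(f"Plan is not valid JSON: {exc}") from exc
    if not isinstance(items, list):
        raise ValueError("Plan must be a JSON list")

    plan = []
    for item in items:
        # Each step must be exactly two strings: a worker name and its subtask
        if not (isinstance(item, list) and len(item) == 2
                and all(isinstance(part, str) for part in item)):
            raise ValueError(f"Bad plan step: {item!r}")
        worker, subtask = item
        if worker not in available_workers:
            # The LLM assigned work to a worker that does not exist
            raise ValueError(f"Unknown worker: {worker!r}")
        plan.append((worker, subtask))
    return plan
```

A supervisor can catch the `ValueError` and re-prompt the LLM with the error message. That surfaces planning mistakes immediately, instead of letting a skipped step show up later as an incomplete final answer.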

```python
# Supervisor pattern: One boss, multiple workers
import json


class SupervisorAgent:
    def __init__(self, llm):
        # llm: any client with a generate(prompt) -> str method.
        # ResearchAgent, CodingAgent and ReviewAgent are your own worker classes.
        self.llm = llm
        self.workers = {
            "researcher": ResearchAgent(),
            "coder": CodingAgent(),
            "reviewer": ReviewAgent(),
        }

    def run(self, task):
        # Plan: decide which workers to use. The LLM returns text,
        # so ask for JSON and parse it into (worker, subtask) pairs.
        raw_plan = self.llm.generate(
            "Break this task into subtasks and assign each to a worker.\n"
            f"Available workers: {list(self.workers.keys())}\n"
            "Reply with a JSON list of [worker, subtask] pairs.\n"
            f"Task: {task}"
        )
        plan = json.loads(raw_plan)
        # plan: [["researcher", "find best practices"], ["coder", "implement"], ["reviewer", "review"]]

        results = {}
        for worker_name, subtask in plan:
            worker = self.workers[worker_name]
            # Pass relevant context from previous results
            result = worker.run(subtask, context=results)
            results[worker_name] = result

        # Synthesize final output
        return self.llm.generate(
            f"Combine these results into a final response:\n{results}"
        )
```

Debate Pattern

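Two or more agents independently answer the same question, then critique each other's answers over several rounds. A judge agent then picks the best answer, or the agents converge on a shared one. This can improve accuracy on complex reasoning tasks: each agent has to defend its answer against specific objections, so an error one agent misses may be caught by another.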
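The pattern allows more than two debaters. The sketch below handles any number of agents and makes two assumptions that are not part of the description above. First, the debate stops early once every agent gives the same answer. Second, a simple majority vote replaces the judge agent. Agents are plain functions that take a prompt and return an answer, so stub functions can stand in for LLM calls. `debate_n` is a hypothetical name.

```python
from collections import Counter
from typing import Callable

Agent = Callable[[str], str]  # an agent here is just: prompt in, answer out


def debate_n(question: str, agents: list[Agent], max_rounds: int = 3) -> tuple[str, int]:
    """Debate among any number of agents; return (winning answer, rounds used)."""
    # Round 0: every agent answers independently
    answers = [agent(question) for agent in agents]
    rounds = 0
    # Keep debating until everyone agrees or the round budget runs out
    while rounds < max_rounds and len(set(answers)) > 1:
        rounds += 1
        new_answers = []
        for i, agent in enumerate(agents):
            others = "\n".join(a for j, a in enumerate(answers) if j != i)
            new_answers.append(agent(
                f"Question: {question}\n"
                f"Your answer: {answers[i]}\n"
                f"Other answers:\n{others}\n"
                "Critique the other answers and defend or update yours."
            ))
        # All agents update from the same previous round
        answers = new_answers
    # Majority vote replaces the judge; ties go to the answer that appears first
    winner, _ = Counter(answers).most_common(1)[0]
    return winner, rounds
```

Full LLM responses rarely match as exact strings. In practice you would likely compare a normalized final answer, such as just the number in a math problem, rather than whole responses.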

```python
# Debate pattern: Multiple agents argue to find truth
# agent_a, agent_b and judge: any objects with a generate(prompt) -> str method
def debate(question, agent_a, agent_b, judge, num_rounds=3):
    # Generate initial positions independently
    agent_a_answer = agent_a.generate(question)
    agent_b_answer = agent_b.generate(question)

    for _ in range(num_rounds):
        # Agent A critiques Agent B
        a_critique = agent_a.generate(
            f"Question: {question}\n"
            f"Your answer: {agent_a_answer}\n"
            f"Opponent's answer: {agent_b_answer}\n"
            "Critique their answer and defend or update yours."
        )
        # Agent B critiques Agent A (sees A's answer from the start of this round)
        b_critique = agent_b.generate(
            f"Question: {question}\n"
            f"Your answer: {agent_b_answer}\n"
            f"Opponent's answer: {agent_a_answer}\n"
            "Critique their answer and defend or update yours."
        )
        # Both updated answers replace the old ones at the same time
        agent_a_answer = a_critique
        agent_b_answer = b_critique

    # Judge picks the best answer (it needs the question to judge fairly)
    return judge.generate(
        f"Question: {question}\n"
        f"Pick the better answer:\nA: {agent_a_answer}\nB: {agent_b_answer}"
    )
```

Pipeline Pattern

Agents process work sequentially, like an assembly line. Each agent transforms the output and passes it to the next. Great for workflows with clear stages.
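Each stage consumes the previous stage's output, so one bad output poisons every stage after it. The sketch below adds a check after each stage and assumes that stages and checks are plain Python callables. `run_checked_pipeline` and `StageFailed` are hypothetical names. In a real system, a check might run tests, validate a schema, or ask a reviewer agent.

```python
from typing import Callable, Optional

Stage = Callable[[str], str]            # transforms the previous stage's output
Check = Callable[[str], Optional[str]]  # returns an error message, or None if the output is OK


class StageFailed(Exception):
    """Raised when a stage's output fails its check."""


def run_checked_pipeline(initial_input: str,
                         stages: list[tuple[str, Stage, Check]]) -> str:
    output = initial_input
    for name, stage, check in stages:
        output = stage(output)
        error = check(output)
        if error is not None:
            # Stop here so a bad output never reaches later stages
            raise StageFailed(f"{name}: {error}")
    return output
```

Failing fast trades some robustness for clarity. The exception names the stage that broke, which also makes a multi-agent run much easier to debug.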

```python
# Pipeline pattern: Sequential processing
pipeline = [
    ("planner", "Break this feature request into technical tasks"),
    ("coder", "Implement each task with clean, tested code"),
    ("reviewer", "Review the code for bugs, security issues, and style"),
    ("documenter", "Write documentation for the new feature"),
]


def run_pipeline(initial_input, agents):
    # agents: maps each stage name to an object with a run(prompt) -> str method
    current_output = initial_input
    for agent_name, instruction in pipeline:
        agent = agents[agent_name]
        # Each stage sees only the previous stage's output
        current_output = agent.run(
            f"{instruction}\n\nInput from previous stage:\n{current_output}"
        )
    return current_output
```

Swarm Pattern

In a swarm, agents dynamically hand off conversations to each other based on the current need. There's no fixed hierarchy — any agent can transfer control to another. This is ideal for customer-facing workflows where the topic shifts.
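A small self-contained simulation makes the hand-off mechanics concrete. The sketch below assumes each agent is a function that reads the shared history and returns a `Reply` naming either a final answer or the next agent. `Reply`, `run_swarm`, and the `max_handoffs` cap are illustrative choices, not a specific framework's API. The cap matters because peers with no fixed hierarchy can otherwise hand a conversation back and forth forever.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Reply:
    answer: Optional[str] = None      # set when this agent can finish the conversation
    handoff_to: Optional[str] = None  # otherwise, the name of the agent to transfer to


SwarmAgent = Callable[[list[str]], Reply]


def run_swarm(agents: dict[str, SwarmAgent], start: str, user_message: str,
              max_handoffs: int = 5) -> tuple[str, list[str]]:
    """Return (final answer, names of the agents that handled the conversation)."""
    current = start
    history = [user_message]  # shared transcript passed along on every hand-off
    visited = [current]
    while True:
        reply = agents[current](history)
        if reply.answer is not None:
            return reply.answer, visited
        if reply.handoff_to not in agents:
            raise ValueError(f"{current} neither answered nor named a known agent")
        if len(visited) > max_handoffs:
            # Peers keep passing the conversation around without anyone answering
            raise RuntimeError(f"Exceeded {max_handoffs} hand-offs: {' -> '.join(visited)}")
        history.append(f"[transferred from {current} to {reply.handoff_to}]")
        current = reply.handoff_to
        visited.append(current)
```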

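```python
# Swarm pattern: Dynamic hand-offs between peers
# TriageAgent, BillingAgent, TechnicalAgent and HumanAgent are your own agent classes.
# Each run(messages) is assumed to return an object with handoff_to, agent_name,
# summary, done, final_answer and message attributes.
agents = {
    "triage": TriageAgent(),        # Classifies the request
    "billing": BillingAgent(),      # Handles billing questions
    "technical": TechnicalAgent(),  # Handles tech support
    "escalation": HumanAgent(),     # Escalates to a human
}


def swarm_loop(user_message, max_turns=20):
    current_agent = agents["triage"]
    messages = [{"role": "user", "content": user_message}]

    # Cap the number of turns so agents cannot hand off to each other forever
    for _ in range(max_turns):
        response = current_agent.run(messages)
        if response.handoff_to:
            # Agent decided to hand off to a specialist; the shared history goes with it
            current_agent = agents[response.handoff_to]
            messages.append({"role": "system",
                             "content": f"Transferred from {response.agent_name}. Context: {response.summary}"})
        elif response.done:
            return response.final_answer
        else:
            # Intermediate step: record it and let the same agent continue
            messages.append(response.message)

    raise RuntimeError(f"No final answer after {max_turns} turns")
```

Agent Communication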

How agents share information is as important as how they reason. Key approaches:

| Method | How It Works | Best For |
|---|---|---|
| Direct messaging | One agent passes output directly to another | Pipelines, supervisor → worker |
| Shared memory | All agents read/write to a shared state store | Collaboration on the same artifact |
| Blackboard | Central "board" where agents post findings | Research, multi-perspective analysis |
| Event-driven | Agents subscribe to events and react | Async workflows, microservice-style |
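A blackboard can be sketched as a small in-memory store. This version uses the hypothetical class name `Blackboard`. It keeps findings per topic and also notifies subscribers whenever something is posted, which combines the blackboard and event-driven rows of the table. A real system would more likely back this with a database or message broker than with a Python dict.

```python
from collections import defaultdict
from typing import Callable

Subscriber = Callable[[str, str], None]  # called with (author, finding)


class Blackboard:
    """Shared board: agents post findings by topic; others read them or subscribe."""

    def __init__(self) -> None:
        self._entries: dict[str, list[tuple[str, str]]] = defaultdict(list)
        self._subscribers: dict[str, list[Subscriber]] = defaultdict(list)

    def post(self, author: str, topic: str, finding: str) -> None:
        self._entries[topic].append((author, finding))
        # Event-driven part: notify every agent that subscribed to this topic
        for notify in self._subscribers.get(topic, []):
            notify(author, finding)

    def read(self, topic: str) -> list[tuple[str, str]]:
        # Return a copy so readers cannot modify the board directly
        return list(self._entries.get(topic, []))

    def subscribe(self, topic: str, callback: Subscriber) -> None:
        self._subscribers[topic].append(callback)
```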

Challenges & Pitfalls

- Cost explosion — N agents × M steps × token cost adds up fast. Budget carefully.

- Error propagation — one agent's mistake cascades to all downstream agents. Build validation between stages.

- Debugging difficulty — tracing a bug across 5 agents with separate conversations is painful. Invest in logging.

- Coordination overhead — agents may duplicate work, contradict each other, or deadlock. Clear protocols prevent this.

- Latency — sequential multi-agent pipelines multiply response time. Parallelize where possible.
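The cost bullet is simple arithmetic. The sketch below assumes every step is one LLM call of fixed size and uses a hypothetical price per million tokens. Real agents usually re-send a growing context on each step, so treat the result as a lower bound.

```python
def estimate_run_cost(n_agents: int, steps_per_agent: int, tokens_per_step: int,
                      usd_per_million_tokens: float) -> tuple[int, float]:
    """Rough lower bound: N agents x M steps x tokens per step, priced per million tokens."""
    total_tokens = n_agents * steps_per_agent * tokens_per_step
    return total_tokens, total_tokens * usd_per_million_tokens / 1_000_000


# Hypothetical numbers: 4 steps per agent, 3,000 tokens per step, $3 per million tokens
single = estimate_run_cost(1, 4, 3_000, 3.0)  # (12000, 0.036)
team = estimate_run_cost(5, 4, 3_000, 3.0)    # (60000, 0.18)
```

With these numbers, five agents doing the same four steps each use five times the tokens of one agent. Trimming what each agent re-reads shrinks the tokens-per-step term. Using a cheaper model for simple roles shrinks the price term.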

Test Yourself

Name and describe 4 multi-agent patterns.

When should you use multi-agent vs. single agent?

What's the biggest risk of multi-agent systems?

How does the debate pattern improve accuracy?

Compare shared memory vs. direct messaging for agent communication.

Interview Questions

Design a multi-agent system for automated code review. What agents would you include?

How would you handle a scenario where two agents in a multi-agent system disagree?

What are the cost implications of a 5-agent pipeline vs. a single agent, and how would you optimize?